For years, digital transformation has mostly been about modernizing how the IT function works.

Organizations moved away from waterfall. They adopted agile delivery, lean thinking, product organization, cross-functional teams, and scaled coordination models that could help many teams move in the same direction. That shift was real, and it mattered. It created shorter feedback loops, stronger trust, and a much better connection between business need and software delivery.

In the best organizations, leaders and end users learned to trust iteration. Delivery teams learned the business. Product development stopped being a one-way handoff and became a repeated cycle of shipping, learning, and improving.

But those models were all built around one basic constraint: software delivery was still mostly a human translation problem.

Need had to be discovered, interpreted, rewritten into tickets, prioritized, designed, implemented, tested, released, explained, and supported by people at every step.

That is the constraint AI is starting to break.

The models that got us here

We should be careful not to dismiss the delivery models of the past twenty years. Agile, lean, product organization, cross-functional teams, Team Topologies, matrix structures, virtual organizations, and frameworks like SAFe or LeSS were not empty management fashion. They were serious attempts to solve real coordination problems.

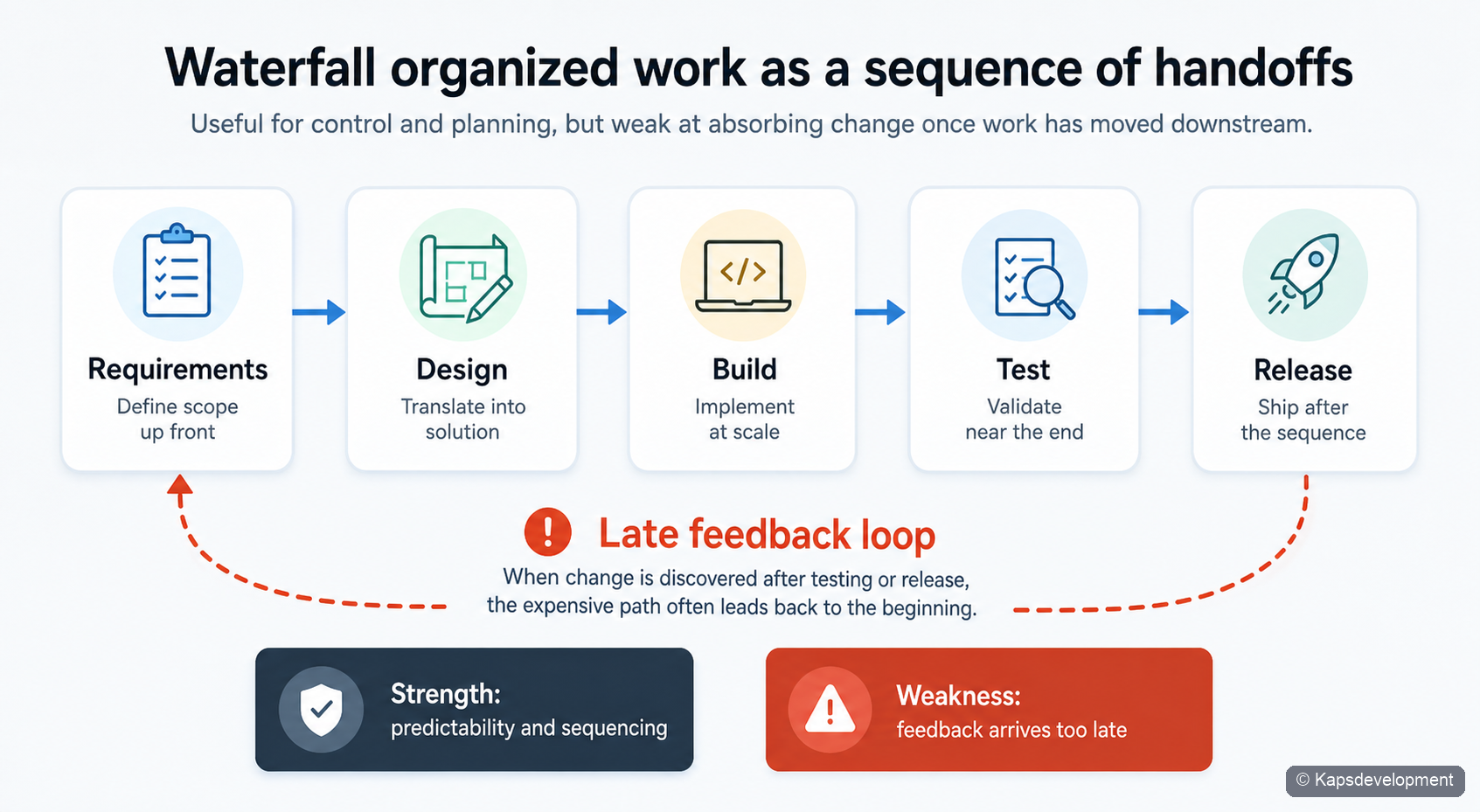

Waterfall tried to create predictability through sequence.

Figure 1. Waterfall optimized for orderly phases, but feedback arrived late and change was expensive.

Scrum and agile delivery improved on this by turning software work into a loop rather than a line. Much shorter iterations and feedback loops meant that no “big surprises” were waiting at the end of a long project.

Waterfall project management and agile methods such as Scrum share a common theme: both try to handle risk. Waterfall project management tries to estimate risk completely up front and plan for it in detail. Agile software development instead tries to move risk from time and cost into scope. That is what makes shorter iterations and feedback loops possible, and they usually result in better delivery on scope as well. Why? Because the need is better understood during the process. Heck… often the need even changes while development is still ongoing.

Figure 2. Scrum shortened the cycle by making planning, delivery, review, and learning repeatable.

Product organization pushed ownership closer to value creation. Instead of temporary projects, organizations started building around products and capabilities that needed to keep evolving. Recognizing that changing needs are actually a healthy sign in a large organization is a leadership skill that is often forgotten. When leaders get this wrong, they want controllable outcomes based on a waterfall plan, so that every detail can be monitored. The downside is that such a plan ignores changing work processes over time, or worse, does not allow for them.

That runs contrary to what top managers should want, because changing work processes are normally a sign of an organization in continuous improvement mode. Changing work processes also mean changing needs for digital transformation. That is why agile software development and product organization have clearly won the race.

Figure 3. In practice, many organizations adopted product organization in a lighter form built around durable cross-functional teams close to the business need.

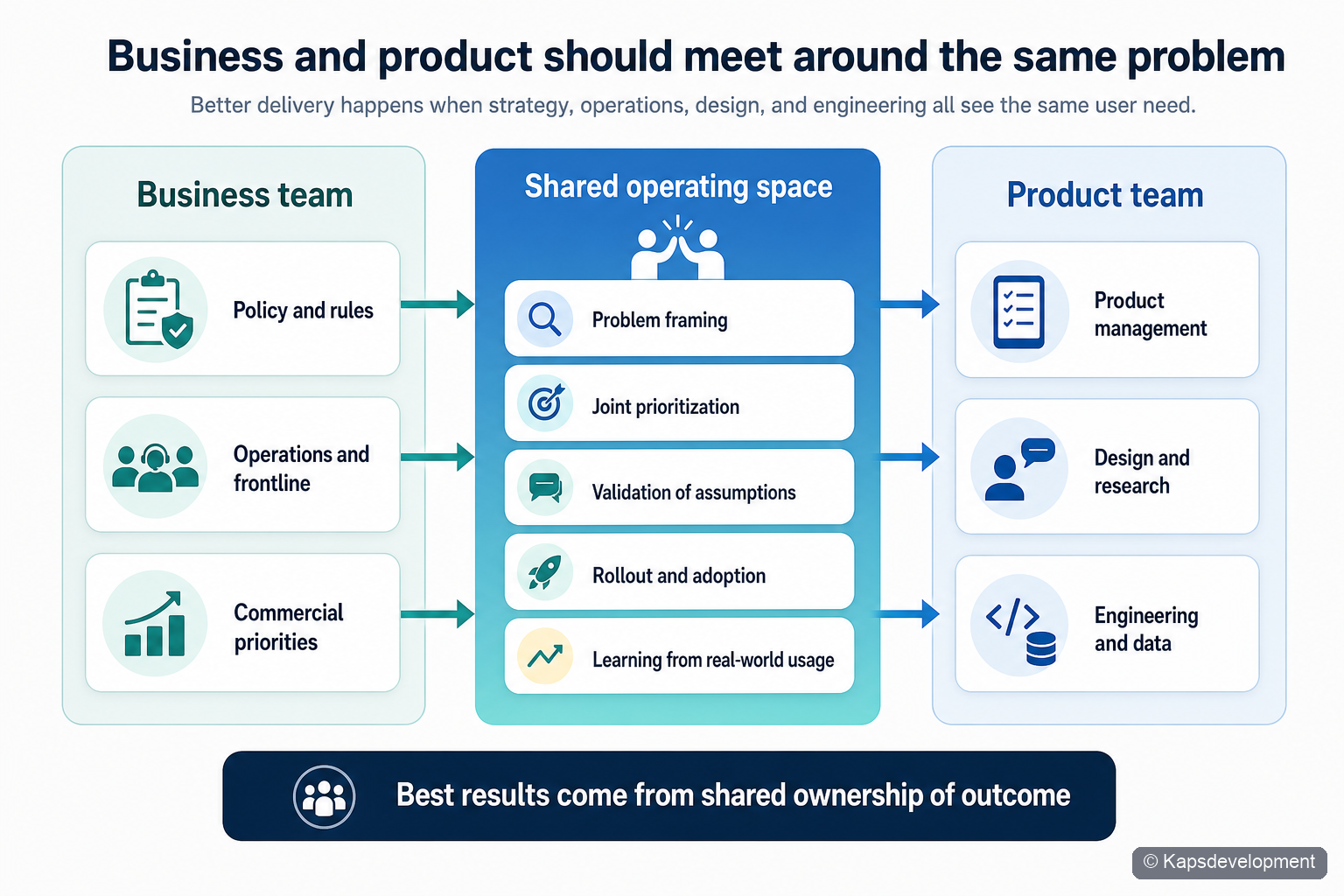

And where complexity increased, organizations created rituals and structures to help business and delivery work closer together.

Figure 4. Better products usually emerge when business and product teams share framing, validation, and rollout.

At larger scale, they layered these patterns into broader coordination models.

Figure 5. Official Essential SAFe 6.0 visual. Source: Scaled Agile Framework posters. Used unmodified. © Scaled Agile, Inc. SAFe and Scaled Agile Framework are registered trademarks of Scaled Agile, Inc.

All of this made sense in a world where most analysis, writing, coordination, and implementation had to be done manually.

That is no longer the world we are entering.

The first two AI waves are already visible

The first wave is easy to see because it hits work that is highly repetitive and language-heavy:

- process knowledge

- project administration

- content production

- documentation and summarization

The second wave is just as important:

- service desk

- customer support

- first-line guidance

- introduction to standard processes, systems, and policies

If you run a service desk today, it is not enough to simply ask your employees to “start using AI.”

The first response increasingly needs to come from AI.

That is not because people do not matter. It is because large language models are now good enough to resolve a very large share of repetitive first-line questions faster, more consistently, and at any hour. If the user is going to receive a standard answer anyway, it often feels worse coming from a human who never really understood them than from a chatbot they already expected to behave like a chatbot. Managing those expectations correctly will be important.

This does not mean the service desk disappears.

It means the human role changes.

Support teams become more valuable where the problem is emotional, ambiguous, high-stakes, or exceptional. They will be measured less on reading the standard answer and more on empathy, judgment, recovery, escalation, and trust repair.

Figure 6. AI can handle standard first-line support efficiently, while human teams become more valuable in emotional, ambiguous, and high-stakes situations.

That shift is already substantial.

But the third wave is larger than support.

The third wave is digitalization itself

We are now approaching a point where advanced models and agentic coding change not only how software is discussed, but how software is continuously created and improved.

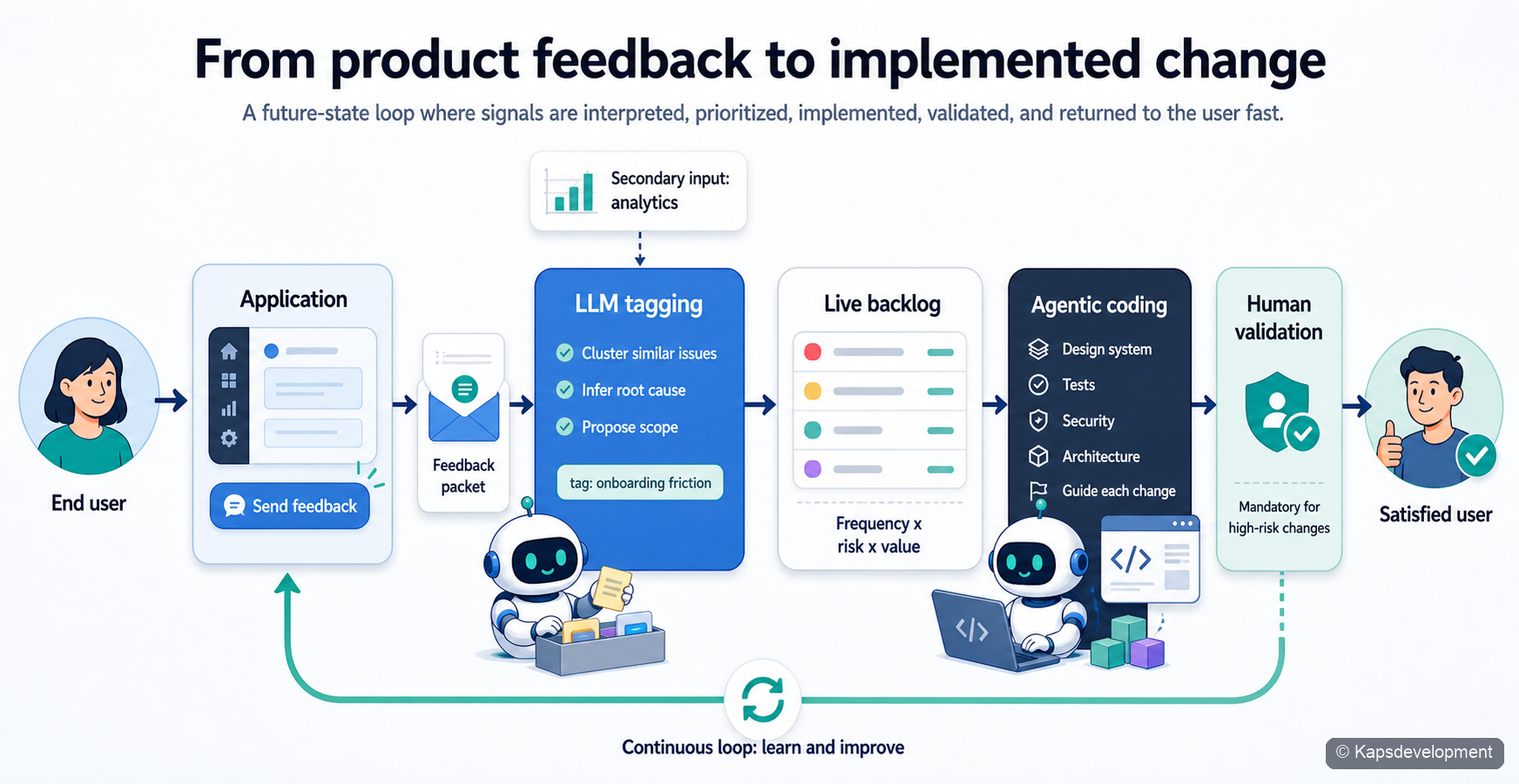

If you already have a digital product with meaningful usage, you can now imagine a loop like this:

- users leave feedback inside the product

- analytics detect friction, hesitation, and repeated failure patterns

- models tag, cluster, and score incoming signals

- a backlog is reprioritized continuously based on business context, observed pain, and frequency

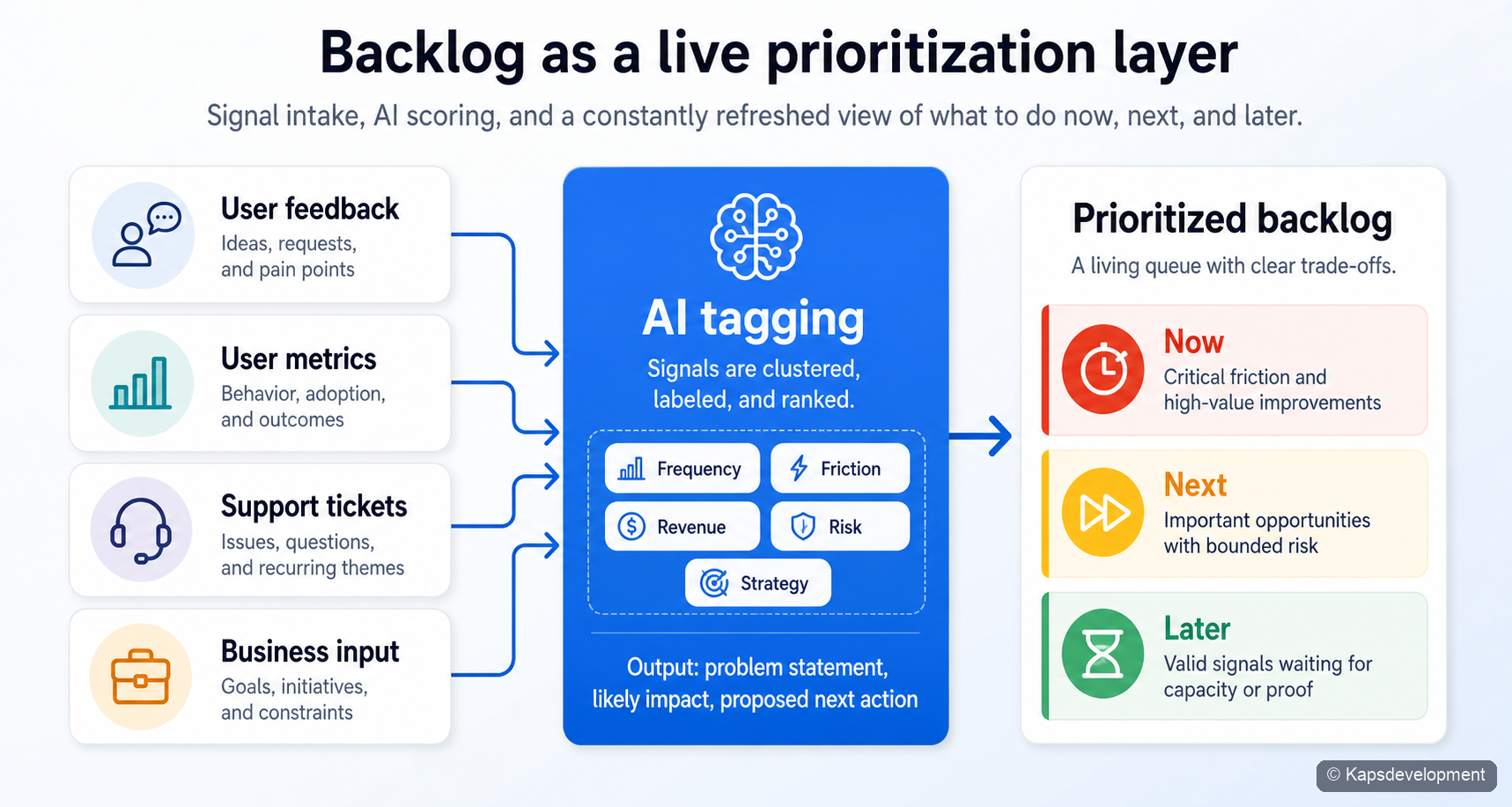

Backlogs can become the practical translation layer between signal and action.

Figure 7. Signals from users, operations, and the business are filtered into an actionable backlog.
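The scoring step in this loop can be illustrated with a small Python sketch. It assumes that tagging and clustering have already happened upstream (for example, by a model), so each signal arrives with a cluster label and a pain score between 0 and 1. The `Signal` type, the `prioritize` function, and the scoring rule (frequency × average pain × business weight) are hypothetical illustrations, not a prescribed method; a real product would tune or replace the formula.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    """One tagged user signal (hypothetical structure), e.g. a feedback comment
    or an analytics event that has already been assigned to an issue cluster."""
    cluster: str   # issue cluster assigned upstream, e.g. "checkout-form"
    pain: float    # observed severity, 0.0 (minor) to 1.0 (blocking)


def prioritize(signals: list[Signal],
               business_weight: dict[str, float]) -> list[tuple[str, float]]:
    """Rank issue clusters by frequency * average pain * business weight.

    The scoring rule is an illustrative assumption. Clusters without an
    explicit business weight default to 1.0.
    """
    totals: dict[str, tuple[int, float]] = {}
    for s in signals:
        count, pain_sum = totals.get(s.cluster, (0, 0.0))
        totals[s.cluster] = (count + 1, pain_sum + s.pain)

    ranked = []
    for cluster, (count, pain_sum) in totals.items():
        frequency = count
        average_pain = pain_sum / count
        weight = business_weight.get(cluster, 1.0)
        ranked.append((cluster, frequency * average_pain * weight))

    # Highest score first; ties are broken alphabetically for a stable backlog order.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked


if __name__ == "__main__":
    # Hypothetical example data: a business-critical checkout issue and a
    # frequent but milder search issue.
    signals = [
        Signal("checkout-form", 1.0),
        Signal("checkout-form", 0.5),
        Signal("search", 0.25),
        Signal("search", 0.25),
        Signal("search", 0.5),
        Signal("search", 0.5),
        Signal("export", 0.25),
    ]
    for cluster, score in prioritize(signals, {"checkout-form": 2.0}):
        print(f"{cluster}: {score:.2f}")
```

Rerunning `prioritize` whenever new signals arrive is what makes the reprioritization continuous in this sketch. The harder design questions sit outside the code: how pain is scored, and who sets the business weights.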

This AI-driven paradigm shift in digital transformation will not stop there.

We will also see, and should prepare for:

- bounded agentic coding implementing changes under strict design and engineering guardrails

- humans validating selectively based on risk

- the product being redeployed and observed again

That is a very different operating model from the old pattern:

business describes need -> delivery team partly understands it -> something ships late or wrong -> users lose trust -> new meetings begin -> another delivery cycle starts weeks or months later

The emerging model is closer to what I would call living and evolving need coverage.

The product does not wait for the organization to catch up in large batches. It keeps adapting to the need that is already visible in the system.

From feedback to implementation

This is the loop that matters most.

Figure 8. A possible future loop where product signals move from feedback to tagging, prioritization, implementation, validation, release, and renewed user value with very little manual delay.

There are several reasons this becomes plausible now.

First, products can instrument themselves far better than before. They can detect where users click back and forth, where flows stall, where forms are abandoned, where help text is repeatedly opened, and where support requests spike after a release. Previously, a human had to analyze this kind of data; LLMs are now highly capable of analyzing it.

Second, models are increasingly strong at interpreting messy human input. Free-text feedback, support conversations, and observed behavior can be turned into structured signals. Instead of a pile of loosely phrased user comments, you can get clustered issues, likely root causes, suggested scopes, and urgency signals.

Third, agentic coding changes the cost of implementation. Once the problem is framed clearly and the rules are well defined, much more of the actual production work can be started automatically. Not all of it. Not blindly. But far more of it than most operating models still assume. Have senior software developers oversee the process, put guardrails in place, and define the architecture, and the risk of running something like this is greatly reduced.

This is why design systems, architecture constraints, testing standards, infrastructure rules, and security policies become critical. The agent should not improvise the shape of the company. It should operate inside a carefully defined space.

With good version control, strong rollback paths, automated test coverage, and disposable runtime environments, it becomes much easier to keep a human in control while still taking advantage of extremely fast implementation.
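The idea of letting an agent act inside a defined space, with humans validating selectively based on risk, can be sketched as a simple routing gate. This is a minimal Python illustration under assumed rules: the `ProposedChange` type, the path prefixes, and the 50-line threshold are hypothetical placeholders, not recommended values. Each organization would derive its own policy from its architecture, security model, and rollback capability.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_DEPLOY = "auto-deploy"     # low risk: deploy, observe, roll back if needed
    HUMAN_REVIEW = "human-review"   # higher risk: a person approves first
    BLOCKED = "blocked"             # failing tests or outside guardrails: rejected


@dataclass
class ProposedChange:
    """A change produced by a coding agent (hypothetical structure)."""
    files: list[str]
    tests_passed: bool
    lines_changed: int


# Hypothetical guardrail policy: paths the agent may never touch, paths where
# any change needs a human checkpoint, and a size limit for automatic release.
FORBIDDEN_PREFIXES = ("infra/secrets/",)
SENSITIVE_PREFIXES = ("auth/", "payments/", "infra/")
MAX_AUTO_LINES = 50


def route_change(change: ProposedChange) -> Route:
    """Decide whether a proposed change ships automatically, waits for a
    human, or is rejected. Checks run from most to least restrictive."""
    if not change.tests_passed:
        return Route.BLOCKED
    # str.startswith accepts a tuple of prefixes.
    if any(f.startswith(FORBIDDEN_PREFIXES) for f in change.files):
        return Route.BLOCKED
    if any(f.startswith(SENSITIVE_PREFIXES) for f in change.files):
        return Route.HUMAN_REVIEW
    if change.lines_changed > MAX_AUTO_LINES:
        return Route.HUMAN_REVIEW
    return Route.AUTO_DEPLOY
```

In this sketch, a 12-line change to a hypothetical `ui/button.tsx` with passing tests would be deployed automatically and observed, while any change under `payments/` would wait for a human checkpoint. Ordering the checks from most to least restrictive keeps the forbidden paths from ever being downgraded to a review.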

Are agile, product thinking, and scaled models dead?

Not exactly.

But cutting-edge companies are already moving fast toward something different.

I do not think agile, lean, or product thinking disappear. I think their center of gravity moves.

Some things become less central:

- heavy manual backlog grooming

- ritualized ticket writing as a translation layer

- repetitive status ceremonies whose main purpose is coordination

- first-line support work that follows a standard answer pattern

- scale frameworks used mainly to move information slowly between humans

Other things become far more central:

- high-quality data

- accessible AI capability across the organization

- secure infrastructure and controlled execution environments

- shared design systems and engineering guardrails

- observability, analytics, and product instrumentation

- auditability, rollback, and human override

- clear ownership of business-critical systems

The scarce resource becomes not coding hours alone, but good framing, good guardrails, and the ability to decide where automation may act and where it must stop.

What large organizations need to invest in now

If this future is even directionally correct, then many large organizations are still focusing too much on the wrong layer.

The strategic advantage will not come only from asking employees to use AI tools in their daily work. That helps, but it is not enough.

The organizations that move fastest will build the underlying conditions for AI-native delivery:

- governed access to data and process context

- well-maintained internal platforms

- strong identity, access control, and secrets handling

- clear software architecture boundaries

- reusable prompt patterns and policy guardrails

- design systems that reduce ambiguity

- evaluation loops for model behavior and code quality

- deployment pipelines with safe rollback and human checkpoints

Once those foundations exist, agentic development starts to democratize digital change. The business side does not need to wait as long for capacity to appear at the center. Needs can be translated, tested, and delivered much closer to where they arise.

That does not remove the need for shared platforms or central oversight.

It makes those central capabilities even more important.

The core systems, the common patterns, the infrastructure, the security model, and the rules for safe execution become the frame that allows distributed delivery to move quickly without becoming dangerous.

The new human role

A lot of the current discussion about AI focuses on replacement. I think the more useful lens is role shift.

Project managers, product managers, developers, designers, support agents, and business specialists are not all disappearing. But many of them will spend less time being manual transport layers between intention and execution.

More of their value will come from:

- setting direction

- shaping constraints

- validating high-risk changes

- handling edge cases and ambiguity

- protecting trust

- improving the systems that generate future changes

- orchestrating rather than performing: developers need to “orchestrate more and play the violin a bit less”

In other words, the human role moves upward and outward.

Less manual relay work. More judgment, stewardship, orchestration, and accountability.

A different definition of digital transformation

For a long time, digital transformation meant modernizing the delivery organization.

That work was necessary, and it still matters.

But the next step is different.

Digital transformation is becoming the design of living systems: systems that can sense need, understand signals, generate change, validate risk, and evolve continuously with the organization.

The companies that win in that environment will not be the ones that merely “adopt AI.”

They will be the ones that connect AI to the actual loops where value is created: first response, feedback interpretation, prioritization, implementation, validation, and continuous product evolution.

That is the deeper shift.

Not faster IT.

A new operating model for how digital capability grows with the business itself.